What if I told you that, in the future, lines of code written by computer engineers will produce music? It sounds a little scary, right?

The idea of software making music without any human participation seems very “futuristic.” But before talking about the future, we need to look at the history of electronic music production to understand how an AI can make music.

History of Electronic Music Production

The first piece of music produced entirely from electronic sound was created in the 1950s in Germany. Before this, musicians used electromechanical instruments, which consist of strings, hammers, and electric pickups. But music produced by these instruments cannot be called purely “electronic music.”

After the invention of the theremin and the synthesizer, the foundation of electronic music was laid. These devices produced sound purely electronically, rather than from vibrating strings.

Artificial intelligence builds on the same concept: creating music from purely electronic sound.

We’ll talk about this later, but first, let’s take a look at what artificial intelligence is and how lines of code can make a piece of music.

What is Artificial Intelligence?

Artificial intelligence is the study of “intelligent” problem-solving behavior and the creation of “intelligent” computer systems. It is already an integral part of much of the software we use. In general, artificial intelligence tries to simulate human-like decision-making in environments that are not clearly defined.

The most common examples of artificial intelligence we have seen in sci-fi movies are Jarvis from Iron Man and self-driving, high-tech spaceships.

To understand how artificial intelligence can create melodies, drum patterns, chords, and all the other essential elements of music, we first have to see how AI works in general.

How does AI work?

An AI system learns from interactions and evidence, using that feedback to produce better results over time. It evaluates new inputs based on previously recorded data and facts.

This collection of previously recorded data and facts is called a dataset, and it serves as the input to the AI algorithm. The machine learning code extracts patterns from the “raw” data and uses them to draw conclusions. The whole process may sound effortless, but it is quite complicated.
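To make “evaluating new inputs against past data” a little more concrete, here is a minimal sketch using k-nearest neighbours, one of the simplest machine learning techniques. It is only an illustration: the AI music tools discussed later rely on deep learning, not on this method. The dataset, the two features (tempo and average pitch), and the mood labels are all invented for this example, and the function name `predict_mood` is hypothetical.

```python
import math
from collections import Counter

# Hypothetical toy dataset (values invented for illustration only):
# (tempo in BPM, average pitch as a MIDI note number) -> mood label
DATASET = [
    ((70, 55), "calm"),
    ((80, 57), "calm"),
    ((75, 52), "calm"),
    ((128, 67), "energetic"),
    ((140, 70), "energetic"),
    ((120, 64), "energetic"),
]

def predict_mood(features, k=3):
    """Label a new song by majority vote among its k nearest songs in the dataset."""
    # Sort past examples by straight-line distance to the new song's features.
    by_distance = sorted(DATASET, key=lambda item: math.dist(item[0], features))
    # Count the labels of the k closest examples and return the most common one.
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]

print(predict_mood((132, 66)))  # energetic
print(predict_mood((72, 54)))   # calm
```

The “conclusion” here comes entirely from the recorded examples: change the dataset and the predictions change with it, which is the core idea behind learning from data.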

How can we create Art and Music with Artificial Intelligence?

Machine learning needs data to produce any results. So if we provide relevant data as our dataset, we can train our algorithm to make art. To make art, AI doesn’t need a specific set of rules; all it needs is thousands of aesthetically pleasing images, from which it learns an aesthetic and creates new images.

“Portrait of Edmond de Belamy” is an AI-generated artwork that sold for a whopping $432,500. There is a plethora of AI artists out there creating mesmerizing art pieces.

Computer scientists and neural network experts want to take machine learning and artificial intelligence to the next level, where AI can make music without any human intervention.

Making Music with AI

As I mentioned earlier, electronic music uses purely electronic sounds. Digital audio is made of tiny measurements, also called samples, which, strung together, form a sound clip (which producers also call a sample). Different types of these sound samples are used throughout the process of making music.

Digital audio is commonly recorded at 48,000 samples per second (CD audio uses 44,100), so even one second of sound consists of tens of thousands of individual values. AI algorithms can manipulate these values to create unique sounds.
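The arithmetic is easy to see in code. This minimal sketch generates one second of a pure 440 Hz tone at 48,000 samples per second, then “manipulates” it with a simple fade-out. The helper names `sine_tone` and `fade_out` are hypothetical, and real AI tools apply far more sophisticated transformations than this.

```python
import math

SAMPLE_RATE = 48_000  # samples per second

def sine_tone(freq_hz, seconds, amplitude=0.5):
    """Generate a pure tone as a list of samples between -amplitude and +amplitude."""
    n_samples = int(SAMPLE_RATE * seconds)
    return [amplitude * math.sin(2 * math.pi * freq_hz * n / SAMPLE_RATE)
            for n in range(n_samples)]

def fade_out(samples):
    """Manipulate a sound by scaling each sample down linearly toward silence."""
    n = len(samples)
    return [s * (1 - i / n) for i, s in enumerate(samples)]

tone = sine_tone(440.0, 1.0)  # one second of the note A4 (440 Hz)
faded = fade_out(tone)
print(len(tone))              # 48000 samples for one second of audio
```

Every sound, however complex, is ultimately a list of numbers like `tone`, which is why an algorithm can reshape it with plain arithmetic.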

AI can use these building blocks of sound to analyze thousands of songs and then create a unique song of its own. Most AI music software uses deep learning to analyze this material and train its machine learning model.

AI can pick up the lengths of notes, chords, tempo, and how the notes relate to one another. You can also select the mood of a song: for instance, if you want to make an orchestral song, all the sounds used will be orchestral. An entire industry is working in this field, including IBM Watson Beat, Google’s NSynth, Spotify’s research lab, Melodrive, and Amper Music.
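As a rough illustration of learning “how each note relates to the next,” here is a minimal first-order Markov chain: it records which notes followed which in some training melodies, then walks those transitions to produce a new melody. This is a much simpler technique than the deep learning used by the tools named above, and it only captures note-to-note transitions, not note lengths, chords, or tempo. The training melodies and the function names are invented for this sketch.

```python
import random
from collections import defaultdict

# Hypothetical training melodies written as note names (invented for illustration).
MELODIES = [
    ["C", "D", "E", "C", "E", "G", "E", "D", "C"],
    ["E", "G", "A", "G", "E", "D", "C", "D", "E"],
    ["C", "E", "G", "A", "G", "E", "C"],
]

def learn_transitions(melodies):
    """Record, for every note, the list of notes that followed it in the training data."""
    transitions = defaultdict(list)
    for melody in melodies:
        for current, following in zip(melody, melody[1:]):
            transitions[current].append(following)
    return transitions

def generate(transitions, start, length, seed=None):
    """Walk the learned transitions to build a new melody of up to `length` notes."""
    rng = random.Random(seed)
    melody = [start]
    while len(melody) < length:
        options = transitions.get(melody[-1])
        if not options:  # dead end: no note ever followed this one in the data
            break
        # Transitions that occurred more often in the data are chosen more often.
        melody.append(rng.choice(options))
    return melody

model = learn_transitions(MELODIES)
print(generate(model, "C", 8, seed=1))
```

Because each next note is drawn from what actually followed the current note in the training data, the output “sounds like” the input melodies without copying any one of them.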

Some of them provide MIDI files, while others provide stems (separate audio files for each part), which can later be edited in a DAW (digital audio workstation).
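A MIDI file stores notes rather than audio, which is why it is so easy to edit afterward. The sketch below builds a minimal single-track Standard MIDI File by hand using only Python’s standard library. It is a simplified illustration, not how any of the tools above produce their output: it assumes one channel, a fixed velocity, and no tempo event (so players fall back to the default of 120 BPM). The function names are hypothetical.

```python
import struct

TICKS_PER_QUARTER = 480  # time resolution: ticks per quarter note

def vlq(value):
    """Encode a non-negative integer as a MIDI variable-length quantity."""
    out = [value & 0x7F]
    value >>= 7
    while value:
        out.append((value & 0x7F) | 0x80)  # continuation bit on all but the last byte
        value >>= 7
    return bytes(reversed(out))

def melody_to_midi_bytes(notes, velocity=90, channel=0):
    """Build a format-0 MIDI file from (MIDI pitch, length in quarter notes) pairs."""
    events = bytearray()
    for pitch, beats in notes:
        events += vlq(0) + bytes([0x90 | channel, pitch, velocity])  # note on, right away
        events += vlq(int(beats * TICKS_PER_QUARTER)) + bytes([0x80 | channel, pitch, 0])  # note off
    events += vlq(0) + b"\xff\x2f\x00"  # end-of-track meta event
    header = b"MThd" + struct.pack(">IHHH", 6, 0, 1, TICKS_PER_QUARTER)  # format 0, 1 track
    track = b"MTrk" + struct.pack(">I", len(events)) + bytes(events)
    return header + track

# C4, D4 (one beat each), then E4 held for two beats; 60 is middle C.
data = melody_to_midi_bytes([(60, 1), (62, 1), (64, 2)])
```

Writing `data` to a file ending in `.mid` (opened with `"wb"`) should give a file that a DAW can import, where each note can then be moved, lengthened, or assigned a different instrument.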

Let’s take a look at two of the most popular AI music tools: Google’s NSynth and Amper Music.

Google Magenta’s NSynth

NSynth stands for “Neural Synthesizer.” It is part of Magenta, Google’s open-source research project that explores the role of machine learning in the creative process of making art and music.

Google’s Magenta project has created tools that generate sounds and samples with the help of machine learning. You can feed in two different sounds, and NSynth can produce many new sounds that combine them in different ways.
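NSynth blends sounds with a neural network that works on learned representations of the two inputs, which is far beyond a short example. The toy sketch below does something much simpler, a plain linear crossfade of raw samples at several ratios, purely to illustrate how two inputs can yield a whole family of in-between sounds. It is not NSynth’s method, and the helper names are hypothetical.

```python
import math

SAMPLE_RATE = 48_000  # samples per second

def tone(freq_hz, n_samples):
    """A simple sine tone standing in for a recorded sound sample."""
    return [math.sin(2 * math.pi * freq_hz * n / SAMPLE_RATE) for n in range(n_samples)]

def blend(a, b, mix):
    """Linear crossfade: mix=0.0 returns sound a, mix=1.0 returns sound b."""
    return [(1 - mix) * x + mix * y for x, y in zip(a, b)]

sound_a = tone(220.0, 4800)  # 0.1 s of a 220 Hz tone
sound_b = tone(330.0, 4800)  # 0.1 s of a 330 Hz tone
# Five variations from pure A, through mixtures, to pure B.
variations = [blend(sound_a, sound_b, step / 4) for step in range(5)]
```

A neural approach can produce combinations that no simple mix of the waveforms could, which is what makes tools like NSynth interesting to producers.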

At the moment, Magenta comes as an Ableton Live plugin and as standalone software for Windows and macOS. Magenta can produce different sounds as samples, and you can then stack the audio files on top of each other or rearrange them in a DAW.

Amper Music

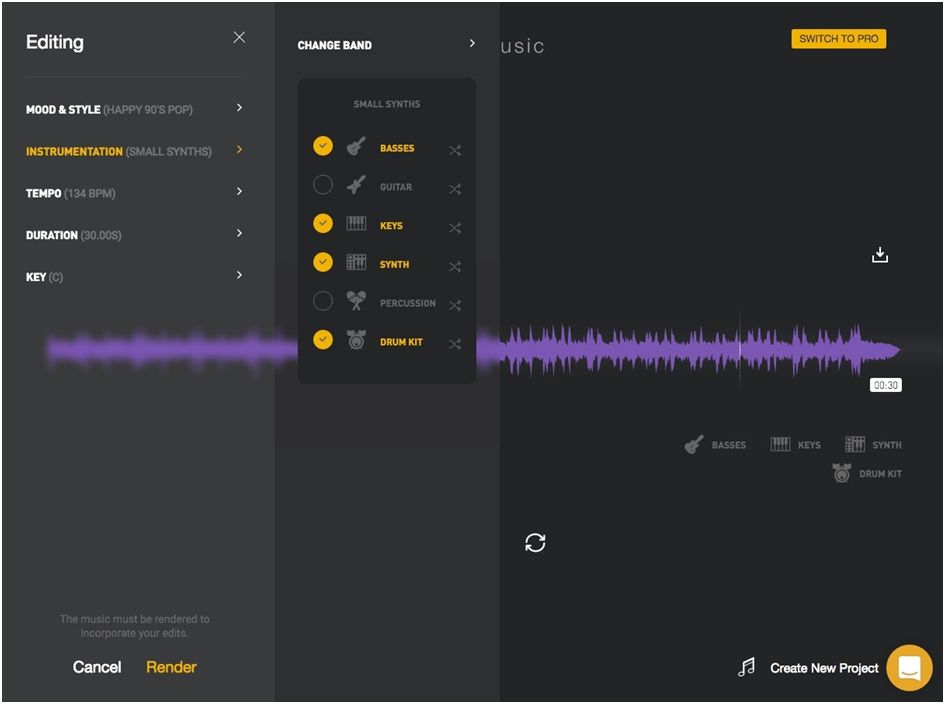

Amper Music’s AI production platform is the most promising among these platforms. Its ease of use has made it a very popular choice for making music effortlessly.

You just log on to the website, pick a genre and mood, and that’s it. It’s that simple. You get audio files that you can adjust to your needs.

The music Amper provides is not bad at all. It can be used in YouTube promotions or as background music for a video you made, and it is often hard to tell “coded” music apart from “written” music.

Conclusion

For some music producers, the idea that a machine learning algorithm can make a song is very ‘spooky.’ Right now, AI is not good enough to make a song that could win a Grammy. But this is just the beginning of a revolution.

For now, artificial intelligence can be used as a powerful tool for producing music. In the future, AI may become capable of replacing human musicians, but only up to a certain level: humans have a sense of creativity, while AI analyzes a collection of songs and then creates a new song from them.

So, for now, all we can say is that AI is a reliable tool for collaborating and speeding up the process of making music.

I’m a writer and blogger, and a travel enthusiast as well. I love writing about the things I’m passionate about, and I hope my experiences and write-ups can help others.